A Curious and Terrified Marketer’s Start to AI and Predictive Analytics – Pt 2

Summary

Continuing from their previous blog, a marketing consultant who is a novice to AI experiments with a machine learning platform's free trial to see whether performing predictive analytics is as easy in practice for new users as it sounds in theory.

By Sarah Threet, Marketing Consultant at Heinz Marketing

In my previous blog, I shared how I wanted to begin to demystify AI for myself by looking into how it can be used for predictive analytics. I learned the high-level information in layman’s terms and felt convinced that perhaps some of these platforms were so user friendly that just about anyone with a sense of data could use them. I proceeded to test that assumption in a free trial with DataRobot (no affiliation with this blog; my research suggested that their platform was user friendly for novices). I am a little bummed to share that my DataRobot experiment was not so successful. This isn’t the fault of DataRobot; rather, I require more background and contextual education to use this kind of application in my use cases.

I’ve concluded I’ll need to learn several concepts and new language before the platform can be “user friendly” to me. Although the research I did claims this platform is relatively user friendly, I cannot yet compare it to other machine learning platforms, so I am not certain it is comparatively the most user friendly.

Business Case Walkthrough

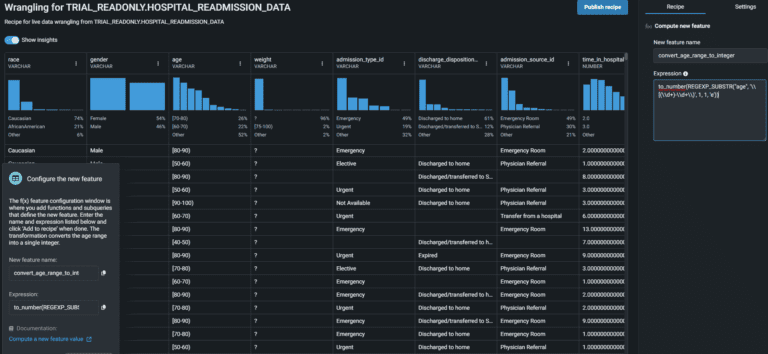

When I opened “Workbench” (where you run your models in the platform), I didn’t know where to start. Thankfully, DataRobot has a pop-up for a case study walkthrough. Their business case examples were useful; it helped to work through modeling a set of data with a real-life goal in mind, which in this case was to predict the likelihood of a patient being readmitted. I thought these “business accelerators” were a nice feature of this platform for learning and application purposes.

Unfortunately, the walkthrough hops in at a point in the modeling where the data is already cleaned, the model selected, the data split, and the model trained. That made me realize very quickly that I wasn’t certain how to clean a set of data for a particular type of model, and I wasn’t sure how much training would be involved, or how to even execute it. At a high level, it makes sense you would keep feeding the model data to teach it and test it, but how to feed, tweak, and test… is a different story.
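For readers who want to see what those skipped steps can look like, here is a minimal sketch in plain Python. It is not DataRobot's process: the data is made up, the "model" is a simple straight-line fit, and every function name is hypothetical. It only illustrates the general order of clean, split, train, and test.

```python
# Hypothetical toy data: (feature, target) pairs; None marks a missing value.
rows = [(1.0, 2.1), (2.0, 3.9), (None, 5.0), (3.0, 6.2), (4.0, 7.8),
        (5.0, 10.1), (6.0, None), (7.0, 14.2), (8.0, 15.9), (9.0, 18.1),
        (10.0, 20.0), (11.0, 22.1)]

def clean(rows):
    """Drop any row that has a missing value."""
    return [(x, y) for x, y in rows if x is not None and y is not None]

def split(rows, train_fraction=0.8):
    """Keep the first part for training and hold out the rest for testing."""
    cut = int(len(rows) * train_fraction)
    return rows[:cut], rows[cut:]

def train(rows):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(rows)
    mean_x = sum(x for x, _ in rows) / n
    mean_y = sum(y for _, y in rows) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in rows)
    sxx = sum((x - mean_x) ** 2 for x, _ in rows)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def mean_absolute_error(model, rows):
    """Average size of the gap between predicted and actual values."""
    slope, intercept = model
    return sum(abs(y - (slope * x + intercept)) for x, y in rows) / len(rows)

cleaned = clean(rows)
train_rows, test_rows = split(cleaned)
model = train(train_rows)                      # the model only ever sees train_rows
test_error = mean_absolute_error(model, test_rows)

print(f"trained on {len(train_rows)} rows, tested on {len(test_rows)} rows")
print(f"mean absolute error on held-out rows: {test_error:.2f}")
```

The point of holding rows back is that the error is measured on data the model never saw during training, which is a fairer check than scoring it on the rows it learned from.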

Oh yes, and then there was this part with functions and subqueries where it fed me what to input into the expression and that was a bit terrifying. I wouldn’t know what those inputs would be if trying to apply this knowledge to a different use case. So perhaps there is no necessary coding involved (unless you want to make applications), but I would certainly suggest understanding functions and maybe some statistical regression.

In every step of the walkthrough, they provide links to documentation detailing how to make models, wrangle data, do experimentation, start building applications… it’s a pretty impressive library of UI and API docs. I’m sure they would have been more useful to me if I understood the language better. It’s a major roadblock in execution when you don’t understand the language or have contextual knowledge.

Experimenting

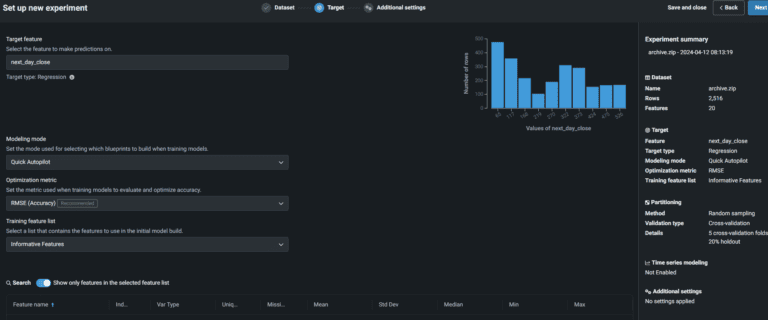

I went to Kaggle to download some cleaned data for experimentation. I chose a dataset from business stocks with an objective of predicting the next day close amount on the stock. In this case, I at least wouldn’t need to clean any data, and the objective was clear for a “quick” experiment.

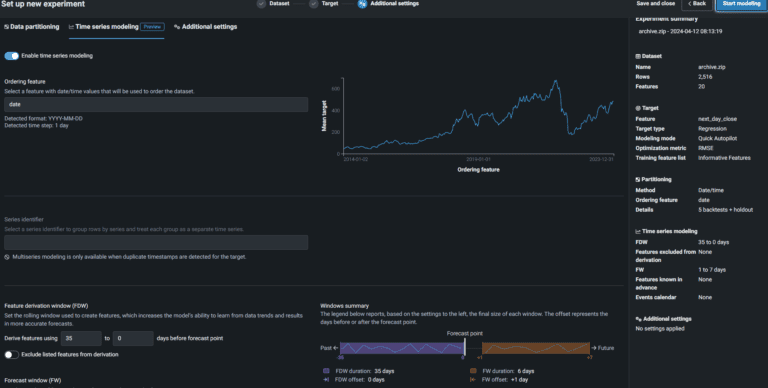

I began uploading the data, chose my target “feature” (which was the “next day close”), and generally just went with what the platform recommended. It also defaulted to a regression model given the dataset I fed it and the target feature; I did not seem to have an option to change this to a different type of model, and I’m not sure why.
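To make the idea of a target feature a little more concrete, here is a small sketch of how a “next day close” column could be built, assuming it simply holds the following trading day's closing price. The prices and field names are made up, and this is not how the Kaggle dataset or DataRobot necessarily construct it. Because the target is a number rather than a category, predicting it is a regression problem.

```python
# Hypothetical closing prices for consecutive trading days (made-up values).
closes = [101.2, 102.5, 101.9, 103.4, 104.0]

# Pair each day's close (an input feature) with the following day's close
# (the target). The final day has no "next day", so it yields no example.
examples = [
    {"close": today, "next_day_close": tomorrow}
    for today, tomorrow in zip(closes, closes[1:])
]

for row in examples:
    print(row)
```

Five days of prices produce four usable examples, since the last day has nothing to be paired with.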

Next, I selected the time-series modeling so I could see how the model might predict the “next day close” amount to change over a period of time. At no point in my experiment did I come across “wrangling data” or a need to insert operations and recipes, all words I am unfamiliar with in this context but steps I remembered from the walkthrough. I’m not sure why this process isn’t generally the same, or if it was a user error on my part.

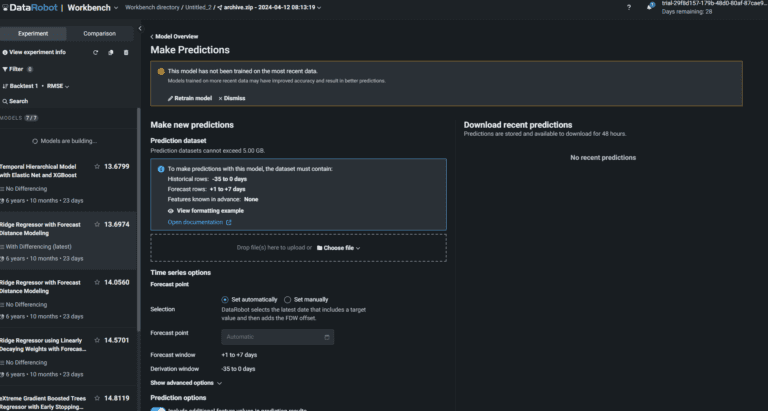

Then I hit my snag: The platform began building models and I had no clarity as to which one to choose or why. Besides that, I had no context as to why it was building multiple models anyway. I chose one of the models and selected “make prediction”. However, for every model built for me, I got the same answer which was that the model had not been trained on the data. I do not yet know how to train models.

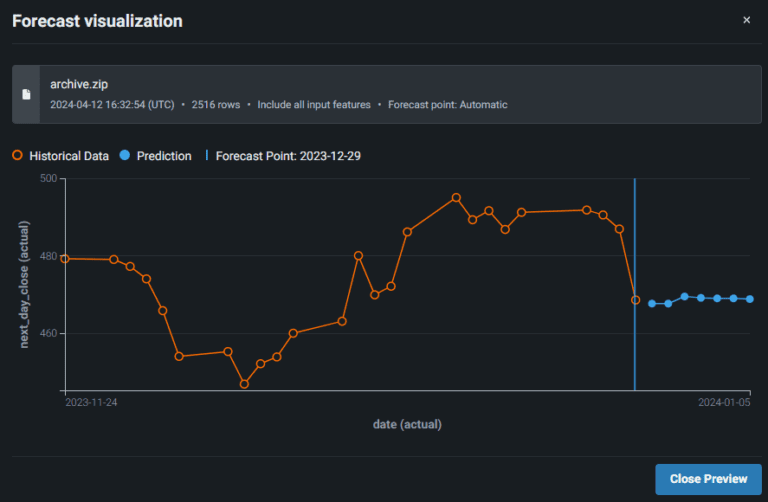

I proceeded to feed it the same data again using the automatic presets. I’m not sure if I should have pruned my features or included prediction intervals. This was the visualization I was given:

I uploaded the data again and did the same thing to see if for some reason I would get a different answer; it remained the same.

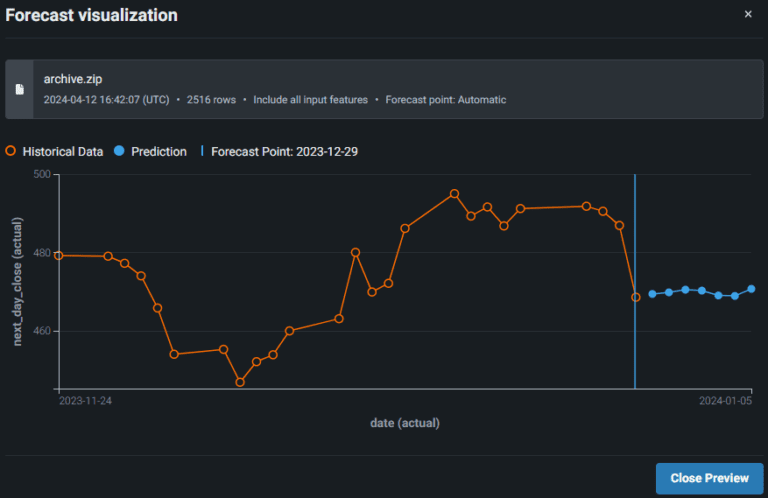

I uploaded the data to a different model that had been built, and I got slightly different results:

I do not currently have the education to determine which model is more accurate than the other, so this is where my experiment ends.
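One common way to compare models, sketched below under stated assumptions, is to score each model's predictions against known actual values it was not trained on and prefer the lower error. The numbers and model names here are invented for illustration, and root mean squared error (RMSE) is just one of several metrics a platform might report.

```python
import math

# Hypothetical held-out actual values and two models' predictions for them.
actual = [103.4, 104.0, 102.8, 105.1]
predictions = {
    "model_a": [103.0, 104.5, 103.1, 104.6],
    "model_b": [101.9, 105.8, 101.0, 106.9],
}

def rmse(actual, predicted):
    """Root mean squared error: lower means predictions sit closer to reality."""
    squared_errors = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

scores = {name: rmse(actual, preds) for name, preds in predictions.items()}
best = min(scores, key=scores.get)

for name, score in scores.items():
    print(f"{name}: RMSE = {score:.3f}")
print(f"lowest error: {best}")
```

In this made-up example, model_a's predictions stay within about half a point of the actual values while model_b's miss by well over a point, so model_a scores the lower error.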

Conclusion

I wish this platform were as easy for me as my high-level knowledge suggested, but I hadn’t expected it to be. I got further into it than I had anticipated, but I wish I better understood the process and language, why to choose particular functions and selections, what the results meant, and how to optimize.

DataRobot does, however, mitigate this lack of knowledge with DataRobot University. At the time of writing this, it’s $300/year for access to all courses, including a business professional track and a technical professional track.

I haven’t compared the cost for this education against competitors, but it seems cost-effective to me – perhaps because its teaching is focused on using only its platform. Before I choose how to continue my education, I’d want to know how to get the most knowledge for my money, and I’d want to feel confident that this platform is worth being certified to use over another platform certification.

Ultimately, I cannot conclude that a platform like DataRobot is easy enough for any marketer to use for predictive analytics. I currently believe it’s necessary to brush up on some statistical regression, familiarize yourself with the language, and have a more advanced understanding of functions – I’m not sure where to even start on the training and optimizing of models though, so that’s why a little schooling seems reasonable first.

Let me know if you found this blog useful! I personally find it illuminating to read and watch through other professionals’ learning processes. Maybe I saved you some time. Maybe you have a tip for me? I would love to hear if you do!